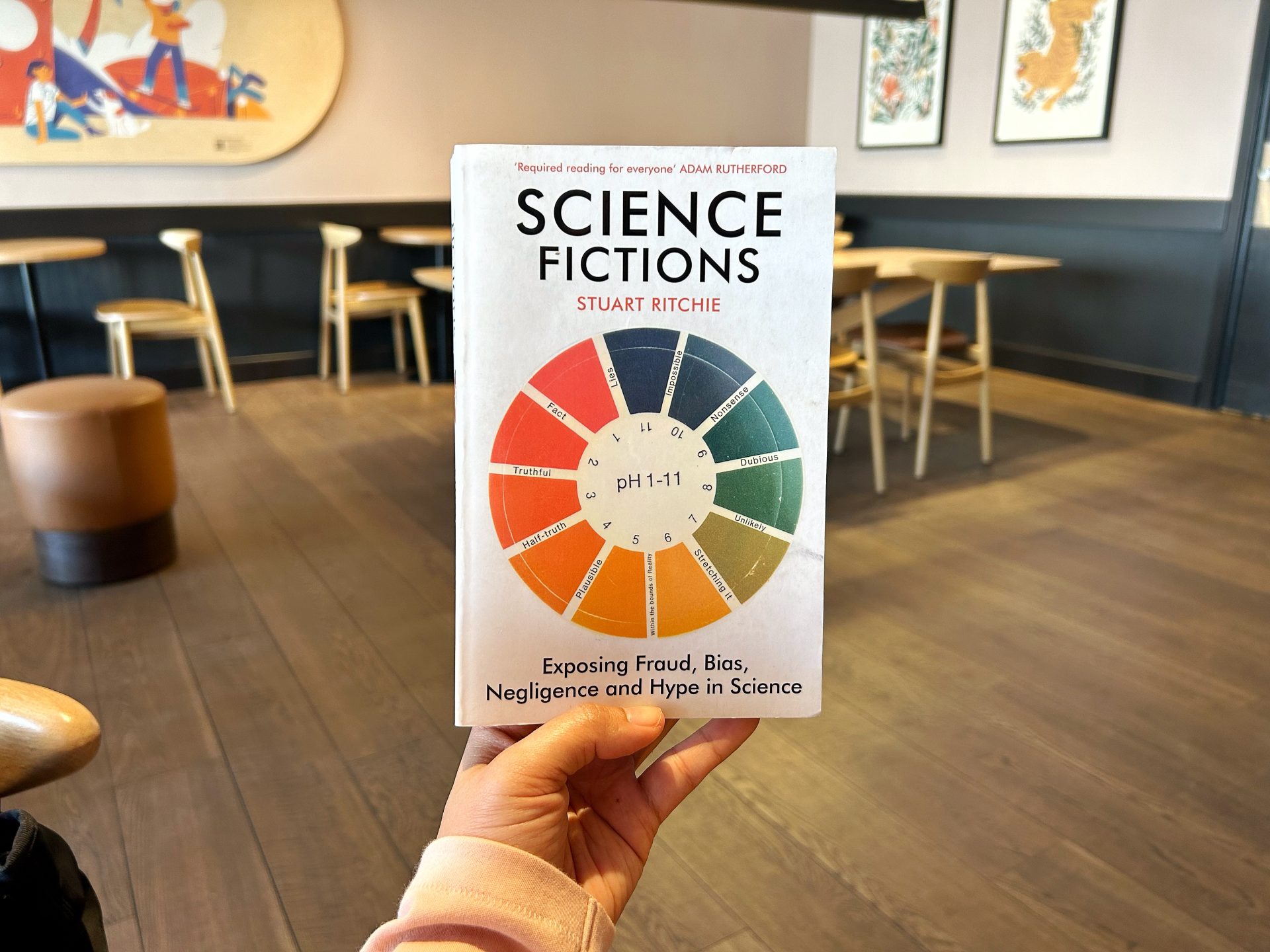

Science is a powerful tool that shapes our understanding of the world and guides us towards informed decision-making. It offers a path to truth by meticulously investigating hypotheses, performing experiments, and subjecting findings to rigorous scrutiny. However, the scientific process frequently intersects with politics and hidden agendas, creating an environment conducive to fraudulent practices, so that our trust in science sometimes rests on what can only be described as sheer nonsense.

In Science Fictions, Stuart Ritchie takes us behind the scenes of groundbreaking scientific discoveries, peeling back the curtain to expose the hidden faults and flaws lurking in the shadows. Data fraud and manipulation, publication and meaning-well bias, statistical p-hacking, and overhyped discoveries easily slip through the cracks, presenting themselves as the truth we seek. As we read the book, we increasingly feel disheartened at the realization that our belief in science can sometimes rest upon nothing more than utter falsehoods.

The less acquainted you are with the world of research and academia, the more essential this book becomes. Its pages reveal an open secret that is known mostly to scientists themselves.

This book sheds light on the vulnerabilities of the scientific system so that we can equip ourselves with the critical thinking needed to navigate the vast sea of information that surrounds us. By recognizing and addressing these concerns, we can hopefully distinguish between legitimate scientific breakthroughs and dubious claims, and help prevent the spread of misleading information.

In a thought-provoking conclusion to the book, Ritchie not only shares his invaluable perspective on the perplexing question of how to address the challenges surrounding the integrity of the scientific system but also presents concrete solutions that universities, journals, and funders can implement to mend the very fabric of scientific culture.

Highlights

Mertonian Norms of Science

- Universalism: a claim is judged the same way no matter who comes up with it; a scientist’s status should have no bearing on how their factual claims are assessed.

- Disinterestedness: scientists aren’t in it for money, for political or ideological reasons, or to enhance their own ego or reputation. They’re in it to advance our understanding of the universe by discovering things and making things.

- Communality: scientists should share knowledge with each other.

- Organized scepticism: nothing is sacred, and a scientific claim should never be accepted at face value. We should suspend judgment on any given finding until we’ve properly checked all the data and methodology.

Why do fraudsters commit such brazen acts?

- Desperation for grant money, or funding that dried up during the period they were under investigation

- The fraudster’s pathologically mistaken views on what science is about

- Scientists who commit fraud care too much about the truth, but their idea of what’s true has become disconnected from reality

Scientific fraudsters do grievous and disproportionate damage to science

- The waste of time spent on investigations

- The waste of money from their research grants

- Demoralising effect on scientists

- Pollutes the scientific literature

- The effects of fraud bleed out far beyond the journals where the fabrications are published

- Practitioners who rely on research can be misled into using treatments or techniques that either don’t work or are actively dangerous

- Betrayal of public trust

If you find an effect in an underpowered study, that effect is probably exaggerated.

Stuart Ritchie, Science Fictions
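
The quote above can be checked with a small simulation. The sketch below is only an illustration: the true effect of 0.2 standard deviations, the 20 participants per group, the known standard deviation of 1, and the simple z-test at the 5% level are all assumptions chosen for the example, not figures from the book.

```python
import random
import statistics


def simulate_significant_effects(true_effect=0.2, n_per_group=20,
                                 n_studies=5000, seed=1):
    """Run many small two-group studies and keep only the 'significant' ones.

    Assumes normally distributed outcomes with a known standard deviation
    of 1, so a z-test on the difference in means is appropriate.
    """
    rng = random.Random(seed)
    standard_error = (2 / n_per_group) ** 0.5
    significant = []
    for _ in range(n_studies):
        control = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
        treated = [rng.gauss(true_effect, 1.0) for _ in range(n_per_group)]
        diff = statistics.fmean(treated) - statistics.fmean(control)
        if abs(diff) / standard_error > 1.96:  # two-sided test, alpha = 0.05
            significant.append(diff)
    return significant


effects = simulate_significant_effects()
mean_abs = statistics.fmean([abs(e) for e in effects])
print(f"{len(effects)} of 5000 studies were significant")
print(f"true effect: 0.20, mean size of significant estimates: {mean_abs:.2f}")
```

With these settings, an estimate can only reach significance if it is larger than roughly 0.62 in absolute value, which is more than three times the assumed true effect. Any small study that "finds" the effect therefore has to overstate it.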

Publication Bias or File-drawer Problem

- Scientists choose whether to publish a study based on its results.

- Results that support a theory are written up and submitted to a journal with a flourish; disappointing ‘failures’ are quietly dropped.

Data Manipulation

p-hacking

- Comes in two types:

- Scientists pursuing a particular hypothesis run and re-run their analysis of an experiment each time in a marginally different way, until chance eventually grants them a p-value below 0.05.

- Taking an existing dataset, running lots of ad hoc statistical tests on it with no specific hypothesis in mind, then simply reporting whichever effects happen to get p-values below 0.05 (known as HARKing, or Hypothesising After the Results are Known).
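
To see why both types of p-hacking work so reliably, it helps to count how often chance alone produces a p-value below 0.05 when many tests are run. The sketch below assumes the tests are independent and that no real effect exists; the function names and the choice of 20 tests are illustrative. In real p-hacking the re-analyses are usually correlated, so the true rate would differ.

```python
import random


def chance_of_false_positive(n_tests, alpha=0.05):
    """Probability that at least one of n independent null tests is 'significant'."""
    return 1 - (1 - alpha) ** n_tests


def simulate_p_hacking(n_tests, n_experiments=10_000, alpha=0.05, seed=1):
    """Fraction of simulated experiments in which some test falls below alpha."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_experiments):
        # Under a true null hypothesis, each p-value is uniform on [0, 1].
        p_values = [rng.random() for _ in range(n_tests)]
        if min(p_values) < alpha:
            hits += 1
    return hits / n_experiments


analytic = chance_of_false_positive(20)
simulated = simulate_p_hacking(20)
print(f"analytic: {analytic:.3f}, simulated: {simulated:.3f}")
```

Under these assumptions, running 20 tests gives roughly a 64% chance of at least one "significant" result, even though every effect being tested is zero.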

The Chrysalis Effect

- Initially ugly sets of findings had often metamorphosed into handsome butterflies, with messy-looking, non-significant results dropped or altered in favour of a clear, positive narrative.

Meaning-well bias

- The bias of a scientist who really wants their study to provide strong results.

Two kinds of scientific negligence

- Unforced errors: due to inattention, oversight, or carelessness.

- Baked-in errors: built into the very way studies are designed, due to poor training or sheer incompetence.

Three kinds of hype commonly found in press releases

- Unwarranted advice: the press release gives recommendations for ways readers should change their behavior that are more simplistic or direct than the results of the study can support.

- Cross-species leap: lots of preclinical medical research is done using non-human animals like rats and mice, and the vast majority of the results don’t end up translating to human beings.

- Correlation treated as causation: the press release uses causal language for results that are only correlational.

Frustratingly, once the hype bubble has been inflated by a press release, it’s difficult to burst.

Stuart Ritchie, Science Fictions

Salami-slicing

- Taking a set of scientific results, often from a single study, that could have been published together as one paper, and splitting them up into smaller sub-papers, each of which can be published separately

- It can serve a more sinister purpose than mere CV building: giving the impression that there’s stronger support for the efficacy of a drug than if there were just one or two papers published on it.

The trifecta of salami-slicing, predatory journals, and peer review fraud makes it clear that we shouldn’t be rating scientists on their total number of publications. Alternatives:

- H-index

- Weakness: self-citation, self-plagiarism

- Impact factor

- Weakness:

- Coercive citation: demanding during peer review that authors cite a list of previous papers published in that journal, whether or not they’re strictly relevant to the work at hand

- Citation cartels: backroom agreements are made to cite articles across several different journals

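
For readers unfamiliar with the h-index: it is the largest number h such that a researcher has h papers with at least h citations each. The sketch below computes it and shows how self-citation can inflate it. The citation counts are made up for illustration.

```python
def h_index(citations):
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


# Hypothetical citation counts for four papers by the same author.
honest = [3, 3, 3, 3]
# The same papers after the author cites each of them once in a new paper.
padded = [count + 1 for count in honest]

print(h_index(honest))  # 3
print(h_index(padded))  # 4
```

One extra self-citing paper is enough to raise the index in this toy case, which is why the metric is easy to game.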

Ways to alter the culture of science

- Name and shame more of those who have been found to commit scientific misconduct.

- Develop effective algorithms to spot faked data in scientific papers, and others that can detect problems such as image duplication.

- Create journals that specialize in publishing null results, such as The Journal of Negative Results in Biomedicine, providing a more attractive alternative to the file drawer; or journals that explicitly accept any result, provided the study that found it is judged methodologically sound.

- Focus less on statistical significance and be clearer about the uncertainty of findings: report the margin of error around each number, for example with confidence intervals or Bayesian statistics, and generally show more humility about what can be derived from often-blurry statistical results.

- Educate scientists more effectively on what statistical significance can and can’t show, and reform its use so that mistakes can be avoided.

- Take the analysis out of the researchers’ hands → deal with statistical bias and p-hacking

- Pre-registration → allows us to see what hypotheses the researchers intended to test → so we can check if any of them were switched mid-study

- Open Science → every part of the scientific process should be made freely accessible

- Preprints → other scientists will read and comment on the study so that the author can make any relevant tweaks before they submit it to a journal for official publication
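
As an illustration of reporting a margin of error instead of a bare significance verdict, the sketch below computes a mean with a 95% confidence interval. It uses a normal approximation, which is an assumption of this example (for small samples a t-distribution would give a somewhat wider interval), and the measurements are invented.

```python
import statistics


def mean_with_interval(values, level=0.95):
    """Return (mean, lower, upper) using a normal-approximation interval."""
    mean = statistics.fmean(values)
    standard_error = statistics.stdev(values) / len(values) ** 0.5
    z = statistics.NormalDist().inv_cdf(0.5 + level / 2)
    margin = z * standard_error
    return mean, mean - margin, mean + margin


# Hypothetical measurements, for illustration only.
measurements = [4.1, 5.3, 4.8, 5.9, 4.4, 5.1, 4.7, 5.6]
mean, lower, upper = mean_with_interval(measurements)
print(f"mean = {mean:.2f}, 95% CI = [{lower:.2f}, {upper:.2f}]")
```

Reporting the interval alongside the estimate shows the reader how blurry the result is, rather than collapsing it into "significant" or "not significant".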

Responsibilities of the main players in science

1. Universities

- Hiring based on ‘good scientific citizenship’: not just publication but the complexity of building international collaborations, the arduousness of collecting and sharing data, the honesty of publishing every study whether null or otherwise

- Reward researchers for working towards a more open and transparent scientific literature

- Value quality over quantity

2. The journals

- Promote openness and replicability: explicitly invite scientists to submit replications, to pre-register their plans, and to attach their datasets to their papers

- Institute policies of scanning studies for basic errors, or employ data integrity officers to do random spot checks using methods like the GRIM test
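
The GRIM test (granularity-related inconsistency of means) mentioned above is simple enough to sketch. When the underlying data are whole numbers, such as questionnaire scores, a reported mean must equal some integer total divided by the sample size. The function below is a minimal sketch under that assumption; the function name and the example numbers are illustrative, and it follows Python's own rounding when formatting, whereas a thorough checker would also consider alternative rounding conventions.

```python
def grim_consistent(reported_mean, n, decimals=2):
    """Check whether a reported mean of n integer values is arithmetically possible."""
    reported = f"{reported_mean:.{decimals}f}"
    nearest_total = int(reported_mean * n)
    # Try the integer totals around reported_mean * n.
    for total in range(nearest_total - 1, nearest_total + 3):
        if f"{total / n:.{decimals}f}" == reported:
            return True
    return False


print(grim_consistent(5.18, 28))  # True: 145 / 28 rounds to 5.18
print(grim_consistent(5.19, 28))  # False: no integer total gives 5.19
```

The check is only informative when the sample is small relative to the reported precision; with two decimals and a sample of 100 or more, every mean is possible.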

3. The funders

- Require scientists to do more with the money than just publish papers in high-impact journals

- Fund scientists, rather than their specific projects

- Give those researchers who are judged to be sufficiently creative enough money to fund their planned research without constraints and hope that the increased freedom will lead to new and exciting advances

- Create a shortlist of grant applications that are all above a certain quality level, then allocate the funding by lottery → fairer to lesser-known scientists who may have good ideas but not the network or clout required to be selected for long-term funding; letting random chance do the job saves reviewers the time of attempting the unachievable and circumvents biases that might favor certain types of scientists for their seniority, gender, or other characteristics; it also puts far less pressure on applicants to hype up the importance of their work.
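
The shortlist-then-lottery idea can be sketched in a few lines. Everything here is hypothetical: the application names, the review scores, the threshold, and the number of grants are invented for illustration, and a real funder would need its own rules for scoring and tie-handling.

```python
import random


def funding_lottery(applications, quality_threshold, n_grants, seed=None):
    """Shortlist applications at or above the threshold, then draw winners at random."""
    rng = random.Random(seed)
    shortlist = [name for name, score in applications.items()
                 if score >= quality_threshold]
    if len(shortlist) <= n_grants:
        return sorted(shortlist)  # enough money for everyone on the shortlist
    return sorted(rng.sample(shortlist, n_grants))


# Hypothetical applications with reviewer scores out of 10.
applications = {"A": 8.5, "B": 6.0, "C": 9.1, "D": 7.8, "E": 7.2}
funded = funding_lottery(applications, quality_threshold=7.0, n_grants=2, seed=42)
print(funded)
```

Reviewers only have to decide whether an application clears the bar, not rank near-identical proposals against each other; the random draw does the rest.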

Author: Stuart Ritchie

Publication Date: 21 July 2020

Publisher: Bodley Head

Number of pages: 368 pages
